How Microsoft Research and AI Mindset Doubled Work Quality

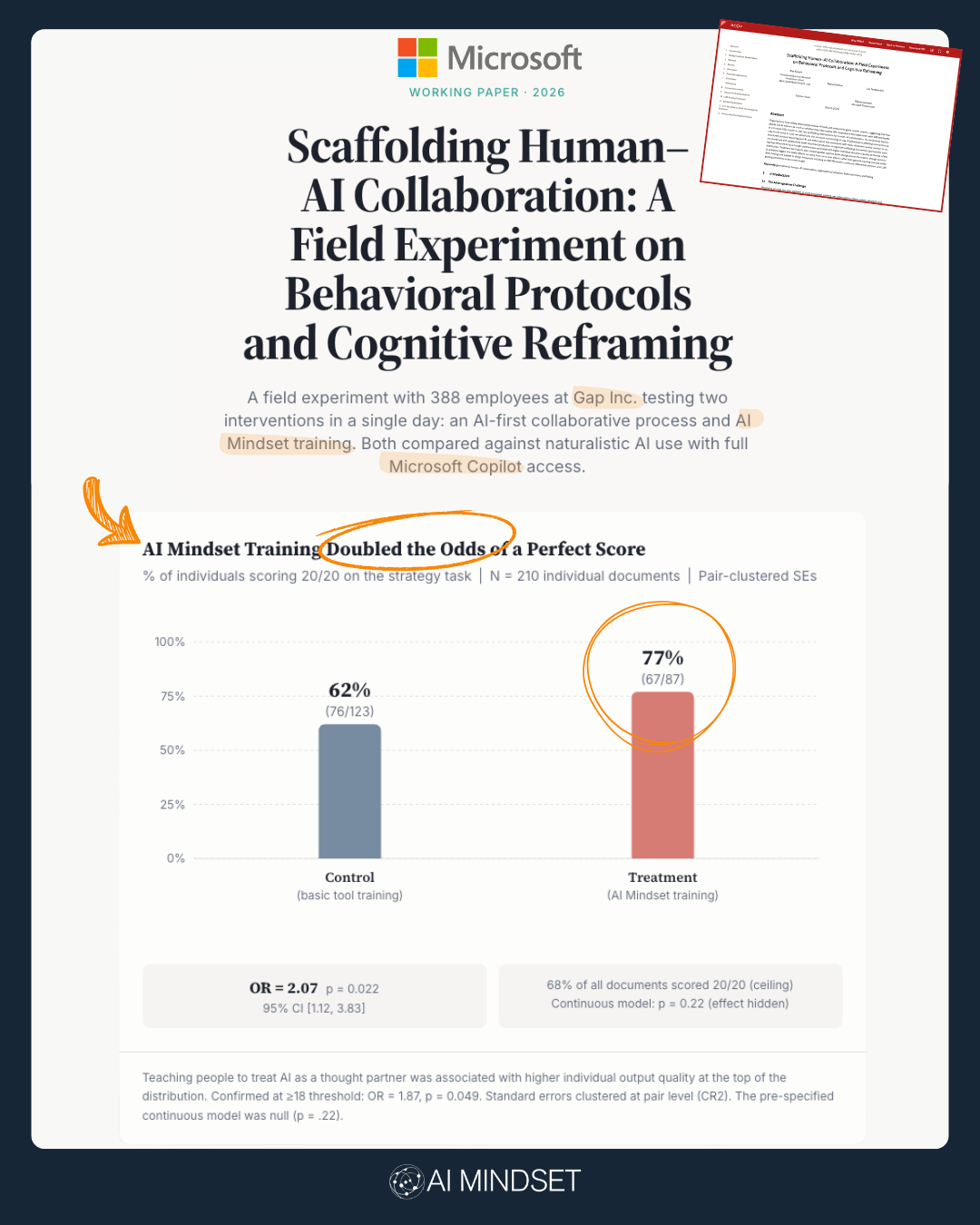

Microsoft Research partnered with Gap Inc. and my company, AI Mindset, on a controlled experiment last year to test one thing: Could our behavioral training improve how people use Copilot compared to standard AI training?

The answer: Yes. It literally doubled the quality of their output.

Not how they felt about AI. Not their confidence level.

The actual, measured quality of their work - graded blind by independent raters who had no idea who got which training.

Same tool. Same task. The only difference was the training.

If your company has rolled out Copilot - or any AI tool - ask yourself: would you like to double the quality of your people's work?

Let's dig in.

THE SETUP

Microsoft designed the experiment. They ran it with 388 employees at Gap Inc. Everyone got full access to Microsoft Copilot.

Then they split people into two groups.

The control group got standard, feature-focused AI training. You know the kind:

This is Copilot. Here are the best features. Here are some killer use cases. Here are some great prompts.

Sound familiar? That's what 99% of AI training looks like right now.

The AI Mindset group got our training. And here's what that looked like:

No feature demos. No killer use cases. No prompt tips.

Instead, we showed them how their brains were fundamentally wired wrong for AI.

WHY YOUR BRAIN IS WIRED WRONG FOR AI

AI Mindset has a unique approach. We start with the person, not the technology.

When you see a text box on a screen, your brain - instantly and subconsciously - treats it like Google. That's because your neural pathways have been shaped by two decades of search engines. Text box = type short query, get answer, leave.

That works great for Google.

It's catastrophic for AI.

Because Copilot and ChatGPT and Claude don't behave like Google. They behave like people. They're designed to be conversed with, pushed back on, and iterated with. They're designed to think with you.

But your brain doesn't know that. Your brain just sees a text box and goes, "Oh, I know what to do here."

This is the central problem of enterprise AI adoption. It's not a technology problem, a features problem, or even a learning problem. It's a behavioral problem.

And you cannot fix a behavioral problem by teaching features.

WHAT WE ACTUALLY DID

In 30 minutes, we didn't tell people to change their behavior. We showed them how to.

We demonstrated, in real time, how they were instinctively treating AI like a search engine. We showed them why they were doing it - the actual neuroscience of why their brain defaults to that pattern.

And then we showed them what it looks like to think with AI instead.

That's the shift. Not more prompts. Not more features. A completely different way of engaging with the tool.

And Microsoft measured it.

THE RESULTS

77% of the AI Mindset group produced top-quality work.

47% of the standard training group did.

Double the odds of producing excellent output, from one 30-minute session. Graded blind by independent raters.

Let me say what that means in plain English: if you have 100 people using AI, standard training gets you about 47 people doing great work. AI Mindset training gets you 77. That's 30 more people clearing the bar. From one session.
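If you want to run the same back-of-the-envelope math for your own team, here is a minimal Python sketch. It assumes the study's top-quality rates (77% and 47%) carry over unchanged to your organization, which no single study can guarantee. The helper name `expected_top_performers` is hypothetical, made up for this illustration.

```python
# Top-quality rates reported in the study, as whole-number percentages.
AI_MINDSET_RATE = 77
STANDARD_RATE = 47


def expected_top_performers(headcount: int) -> tuple[int, int, int]:
    """Hypothetical helper: scale the study's rates to a team of `headcount` people.

    Returns (standard, ai_mindset, difference) as whole people.
    Assumes the study's rates apply unchanged to your organization.
    """
    # Integer arithmetic with +50 rounds half up to the nearest whole person.
    standard = (headcount * STANDARD_RATE + 50) // 100
    ai_mindset = (headcount * AI_MINDSET_RATE + 50) // 100
    return standard, ai_mindset, ai_mindset - standard


for team in (100, 1000):
    standard, ai_mindset, extra = expected_top_performers(team)
    print(f"{team} people: {standard} vs {ai_mindset} ({extra} more)")
```

For 100 people this prints "100 people: 47 vs 77 (30 more)", matching the numbers above. Treat the output as an expected value, not a forecast for any specific team.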

AI NEWS OF THE WEEK

Meta’s "Muse Spark" and the end of the open-source era?

Zuck just dropped Muse Spark, and it’s a massive pivot from the pure open-source LLaMA days toward a hybrid model with actual "Thinking" modes. It’s integrated everywhere from Instagram to your smart glasses, but benchmarks show it’s still playing catch-up to the top-tier frontier models in abstract reasoning. It’s Meta’s "re-entry" moment, but it feels like they’re finally realizing that scale alone doesn’t win the reasoning race.

The Sam Altman Profile: 100 interviews and one blockbuster investigation.

The New Yorker just released a massive deep-dive on Sam Altman, and it’s... a lot. From secret memos to HR documents, it paints a picture of a career-long pattern of deception that’s got the whole industry talking. Sam’s calling it "incendiary," but when your own board and the Farrow/Marantz duo are pointing at the same Slack logs, the "trust" conversation isn't going away anytime soon.

OpenAI’s New Cybersecurity Guard Dog.

While everyone is gossiping about IPOs, OpenAI quietly rolled out a specialized cybersecurity AI to a select group of partners. This isn't just GPT-4 with a hoodie on; it’s an autonomous security analyzer built specifically to hunt for vulnerabilities. It’s the first real sign that the industry is moving from "general chat" to "purpose-built agentic experts" that can actually handle high-stakes technical heavy lifting. Source: Dev.to

WHY THIS MATTERS FOR LEADERS

Your AI tool is not the bottleneck. Microsoft - the company that makes Copilot - just proved it. The tool didn't determine whether people did excellent work. The training did. Stop asking "which AI tool should we buy?" Start asking "how are we training people to use the one we already have?"

Feature training is not enough. If your AI rollout consists of showing people how Copilot works and handing them a prompt library, you are leaving enormous value on the table. Your people are using a fraction of what that tool can do - not because they don't know the features, but because their brains default to the wrong mental model every time they open it.

This scales. 30 minutes. One session. Doubled the quality of output. This isn't a year-long transformation program. It's a mental model shift that sticks.

THE FULL STUDY

Microsoft published the full academic paper on arXiv and built an interactive data explorer to walk through the findings. If you're a leader at a company that's rolled out AI tools - or is about to - take a look.

The full paper: arxiv.org/abs/2604.08678

Interactive data explorer: microsoft.github.io/ai-mindset-experiment/

More soon, friends.